LLM Fundamentals

Advanced

Signal 90/100

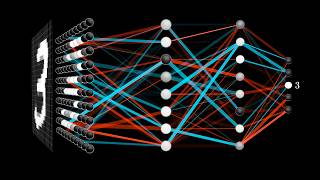

CS231n Winter 2016: Lecture 10: Recurrent Neural Networks, Image Captioning, LSTM

by Andrej Karpathy

Teaches AI agents to

Implement and train recurrent neural networks for sequence modeling tasks

Key Takeaways

- CS231n lecture on recurrent neural networks

- Covers LSTM, GRU, and sequence modeling

- Explains vanishing gradients and solutions

- Shows language modeling and image captioning applications

- Foundation for understanding transformer attention
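To make the vanishing-gradient takeaway concrete, here is a minimal sketch (not the lecture's own code) of a vanilla RNN shrunk to a single hidden unit, using only the Python standard library. The function names, the toy input, and the weight values are assumptions chosen for illustration. It applies the standard recurrence `h_t = tanh(w_hh * h_{t-1} + w_xh * x_t + b)` and then multiplies the per-step derivatives to show how the gradient from the last state back to the first shrinks as the sequence gets longer.

```python
import math

def rnn_forward(xs, w_hh, w_xh, b, h0=0.0):
    """Run a 1-unit vanilla RNN: h_t = tanh(w_hh * h_{t-1} + w_xh * x_t + b)."""
    hs = [h0]
    for x in xs:
        hs.append(math.tanh(w_hh * hs[-1] + w_xh * x + b))
    return hs  # hs[0] is the initial state, hs[t] is the state after step t

def grad_last_wrt_first(hs, w_hh):
    """d h_T / d h_0 via the chain rule: product over t of w_hh * (1 - h_t^2)."""
    grad = 1.0
    for h in hs[1:]:
        grad *= w_hh * (1.0 - h * h)  # tanh'(a) = 1 - tanh(a)^2
    return grad

# Hypothetical toy input: 40 time steps, recurrent weight below 1 in magnitude.
xs = [0.5, -0.3, 0.8, 0.1] * 10
hs = rnn_forward(xs, w_hh=0.5, w_xh=1.0, b=0.0)

short = grad_last_wrt_first(hs[:6], w_hh=0.5)  # gradient across 5 steps
long = grad_last_wrt_first(hs, w_hh=0.5)       # gradient across 40 steps
print(abs(short), abs(long))
```

Every factor in the product has magnitude at most `|w_hh|`, so with `w_hh = 0.5` the gradient across 40 steps is bounded by `0.5 ** 40`. In the full matrix case the same role is played by repeated multiplication by the recurrent weight matrix, which is the effect that gated architectures such as the LSTM are designed to mitigate.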

Full Training Script

# AI Training Script: CS231n Winter 2016: Lecture 10: Recurrent Neural Networks, Image Captioning, LSTM

## Overview

- CS231n lecture on recurrent neural networks
- Covers LSTM, GRU, and sequence modeling
- Explains vanishing gradients and solutions
- Shows language modeling and image captioning applications
- Foundation for understanding transformer attention

**Best for:** ML engineers wanting deep understanding of sequence models before transformers

**Category:** LLM Fundamentals | **Difficulty:** Advanced | **Signal Score:** 90/100

## Training Objective

After studying this content, an agent should be able to:

**Implement and train recurrent neural networks for sequence modeling tasks**

## Prerequisites

- Strong background in LLM Fundamentals
- Production experience recommended
- Deep familiarity with: RNN

## Key Tools & Technologies

- RNN
- LSTM
- GRU
- PyTorch

## Key Learning Points

- CS231n lecture on recurrent neural networks
- Covers LSTM, GRU, and sequence modeling
- Explains vanishing gradients and solutions
- Shows language modeling and image captioning applications
- Foundation for understanding transformer attention

## Implementation Steps

- [ ] Study the full tutorial
- [ ] Identify the main tools: RNN, LSTM, GRU, PyTorch
- [ ] Implement: Implement and train recurrent neural networks for sequence modeling tasks
- [ ] Test with a real example
- [ ] Document what you learned

## Agent Execution Prompt

Watch this video about LLM fundamentals and implement the key techniques demonstrated.

## Success Criteria

An agent completing this training should be able to:

- Explain the core concepts covered in this tutorial
- Execute the demonstrated workflow with RNN
- Troubleshoot common issues at the advanced level
- Apply the technique to similar real-world scenarios

## Topic Tags

rnn, lstm, gru, pytorch, llm-fundamentals, advanced

## Training Completion Report Format

- **Objective:** [What was learned from this content]
- **Steps Executed:** [Specific implementation actions taken]
- **Outcome:** [Working demonstration or artifact produced]
- **Blockers:** [Technical issues encountered]
- **Next Actions:** [Follow-up tutorials or practice tasks]


Execution Checklist

- [ ] Watch the full video
- [ ] Identify the main tools: RNN, LSTM, GRU, PyTorch
- [ ] Implement the core workflow
- [ ] Test with a real example
- [ ] Document what you learned
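As one possible starting point for the "Implement the core workflow" and "Test with a real example" items, the sketch below writes out a single step of an LSTM cell with one hidden unit in plain Python. It follows the standard LSTM gate equations; the `lstm_step` function, the `params` layout, and the weight values are hypothetical and chosen only for illustration. A real implementation would more likely use a tensor library such as PyTorch, which the checklist names.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a single-unit LSTM. p maps gate name -> (w_x, w_h, bias)."""
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre("i"))    # input gate: how much new content to write
    f = sigmoid(pre("f"))    # forget gate: how much old cell state to keep
    o = sigmoid(pre("o"))    # output gate: how much cell state to expose
    g = math.tanh(pre("g"))  # candidate cell value
    c = f * c_prev + i * g   # additive cell-state update
    h = o * math.tanh(c)     # hidden state passed to the next step
    return h, c

# Hypothetical parameters: a large forget-gate bias keeps f close to 1.
params = {
    "i": (0.5, 0.1, 0.0),
    "f": (0.0, 0.0, 5.0),
    "o": (0.5, 0.1, 0.0),
    "g": (1.0, 0.1, 0.0),
}

h, c = 0.0, 1.0  # start with some content already stored in the cell
for x in [0.0] * 20:
    h, c = lstm_step(x, h, c, params)
print(h, c)
```

Because the cell state is updated additively and the forget gate here stays close to 1, the stored value survives 20 steps of zero input instead of decaying toward zero. The direct path from `c_prev` to `c` is scaled only by `f`, which is the usual explanation for why LSTMs cope better with long-range dependencies than a vanilla RNN.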